There was a good discussion on Twitter about memory overcommit: what it is worth and whether you should or should not use it in production. What struck me is how much misunderstanding there is on this subject, even though plenty of documentation is available that explains the subtle difference between good and bad overcommit of memory.

Memory overcommit, the basics.

In short: When you assign more RAM to your VMs than available in your host.

Good memory overcommit: When you assign more RAM to your VMs than available in your host BUT never cross the line where the amount of RAM that is USED by your VMs is more than available in your host.

Bad memory overcommit: When you assign more RAM to your VMs than available in your host AND cross the line where the amount of RAM that is USED by your VMs is more than available in your host.

A simple example:

The host has 48GB of RAM and, just for the sake of argument, we’ll pretend the hypervisor doesn’t use any RAM and there is no memory overhead per VM. I now start loading it with VMs that have 4GB RAM assigned. Without any memory overcommit I can load this host with 12 VMs of 4GB each.

Now let’s say, these VMs normally use only 2.5GB of RAM but sometimes they peak to 4GB. With memory overcommit I could now load the host with 19 VMs of 4GB RAM assigning a total of 76GB RAM and demanding 19 x 2.5GB = 47.5GB of physical memory. Even to me this is a bit on the edge, so I’d reserve some RAM for spikes and would go back to 17 VMs, which would leave me with 17 x 2.5GB = 42.5GB of actively used physical RAM, 17 x 4GB = 68GB of RAM assigned and therefore 68GB-48GB = 20GB of overcommitted RAM. So, 20GB of RAM I didn’t have to pay for. This is a good use of memory overcommit.

Bad use of memory overcommit is when in the previous example I would place more VMs on this host, to the point where the use of physical RAM is higher than the amount of physical RAM present in the host. ESX will start some memory optimization and reclaim techniques, but in the end it will swap host memory to disk, which is bad. It is essential to carefully monitor your hosts to see if you’re moving from good memory overcommit to bad memory overcommit.
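The arithmetic of the example, and the line between good and bad overcommit, can be sketched in a few lines of Python. This is only an illustration of the simplified example above: the `HostPlan` class and its field names are made up for this post, and it ignores hypervisor and per-VM overhead just like the example does.

```python
from dataclasses import dataclass


@dataclass
class HostPlan:
    """Hypothetical model of one host filled with identical VMs."""
    host_ram_gb: float   # physical RAM in the host
    vm_count: int        # number of VMs placed on the host
    assigned_gb: float   # RAM assigned to each VM
    active_gb: float     # RAM each VM normally uses

    @property
    def total_assigned_gb(self) -> float:
        return self.vm_count * self.assigned_gb

    @property
    def total_active_gb(self) -> float:
        return self.vm_count * self.active_gb

    @property
    def overcommitted_gb(self) -> float:
        # Assigned RAM beyond what the host physically has (never negative).
        return max(0.0, self.total_assigned_gb - self.host_ram_gb)

    def classify(self) -> str:
        if self.total_assigned_gb <= self.host_ram_gb:
            return "no overcommit"
        if self.total_active_gb <= self.host_ram_gb:
            return "good overcommit"
        return "bad overcommit"


# The 17-VM case from the example: 4GB assigned, 2.5GB normally used.
plan = HostPlan(host_ram_gb=48, vm_count=17, assigned_gb=4, active_gb=2.5)
print(plan.total_assigned_gb, plan.total_active_gb,
      plan.overcommitted_gb, plan.classify())
```

Raising `vm_count` to 20 pushes the actively used RAM to 50GB on a 48GB host, which is the point where the same calculation reports bad overcommit.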

Misconceptions on memory overcommit

– Transparent Page Sharing (TPS) is a performance hit

– Overcommit is always a performance hit

– Real world workloads don’t benefit

– The gain by overcommitment is negligible

– Overcommitment is dangerous

Transparent Page Sharing (TPS) is a performance hit

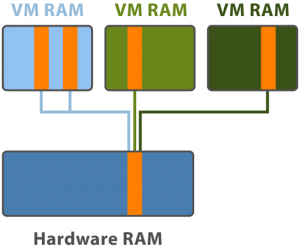

The mechanism underlying memory overcommit is called Transparent Page Sharing (TPS). With this technique, ESX uses hash values to check whether pages in memory for one VM are identical to pages of another VM. If they are, ESX doesn’t store the second page in physical memory but just places a link to the first page. When the VM wants to write to that page, the link is removed and a real page in physical memory is created. This technique, called Copy-on-Write (CoW), incurs an overhead compared to writing to non-shared pages.
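To make the idea concrete, here is a toy model of hash-based page sharing with Copy-on-Write. It is a sketch under my own assumptions, not how ESX is implemented: the `SharedMemory` class, the use of SHA-1 and the bookkeeping dictionaries are all invented for illustration. The one detail taken from the Waldspurger paper listed in the sources is that a hash match is treated as a hint and followed by a full comparison before a page is shared.

```python
import hashlib


class SharedMemory:
    """Toy model of page sharing: identical pages are stored once."""

    def __init__(self):
        self.frames = {}     # frame id -> page content (bytes)
        self.refcount = {}   # frame id -> number of guest pages mapped to it
        self.by_hash = {}    # content hash -> frame id
        self.mapping = {}    # (vm, guest page number) -> frame id
        self.next_frame = 0

    def _alloc(self, content):
        frame = self.next_frame
        self.next_frame += 1
        self.frames[frame] = content
        self.refcount[frame] = 0
        return frame

    def store(self, vm, page_no, content):
        """Map a guest page, sharing a frame if identical content exists."""
        digest = hashlib.sha1(content).hexdigest()
        frame = self.by_hash.get(digest)
        # A hash match is only a hint; compare the full content before sharing.
        if frame is None or self.frames[frame] != content:
            frame = self._alloc(content)
            self.by_hash[digest] = frame
        self.refcount[frame] += 1
        self.mapping[(vm, page_no)] = frame

    def write(self, vm, page_no, content):
        """Copy-on-write: break the share before modifying a shared page."""
        frame = self.mapping[(vm, page_no)]
        if self.refcount[frame] > 1:
            # Shared page: give this VM a private frame, leave the original alone.
            self.refcount[frame] -= 1
            frame = self._alloc(content)
            self.refcount[frame] = 1
            self.mapping[(vm, page_no)] = frame
        else:
            # Private page: overwrite in place (a later scan could share it again).
            self.frames[frame] = content

    def frames_in_use(self):
        return sum(1 for count in self.refcount.values() if count > 0)


mem = SharedMemory()
zero_page = bytes(4096)
mem.store("vm1", 0, zero_page)
mem.store("vm2", 0, zero_page)       # identical content: shares vm1's frame
mem.write("vm2", 0, b"\x01" * 4096)  # the write gives vm2 its own private frame
```

The extra work in `write` for a shared page (allocate and remap instead of writing in place) is the CoW overhead mentioned above.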

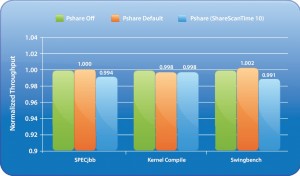

The hypervisor scans the guests at a base scan rate set by “Mem.ShareScanTime”, which specifies the desired time to scan a VM’s entire guest memory. By default ESX will scan every 60 minutes, but depending on the amount of currently shared pages, ESX can intelligently adjust this scan frequency.

When performance is compared between a system with no page sharing, default page sharing and excessive page sharing (forced by the test), the results show that default page sharing is sometimes 0.2% slower and sometimes 0.2% faster than no page sharing. Page sharing sometimes improves performance because the VM’s host memory footprint is reduced so that it fits the processor cache better.

Overcommit is always a performance hit

In short: no, it is not. But you probably expect more from me than just this. Let’s be clear about bad overcommit, which is always a performance hit. As soon as your ESX host is out of physical RAM and starts swapping to disk, there is a big performance hit which should be avoided at all costs. Scott Drummonds (VMware) recently wrote about using Solid State Disks in your SAN as swap space for your ESX host (http://vpivot.com/2009/12/24/solid-state-disks-and-host-swapping/), which would make it less of an issue, but there is still a performance hit.

But with good overcommit the performance hit is very low, as you read in the previous section. There is also a phase in between good and bad overcommit, and that is when your good overcommitted host is getting low on free physical memory. When this situation occurs, ESX will start looking for memory that is claimed but unused by the VMs and will try to reclaim it using a technique called ballooning.

The balloon driver inside the Guest OS is triggered by ESX to try and claim free pages by demanding memory (inflating) from the Guest OS. The Guest OS will then use page reclaiming algorithms to determine which pages can be assigned to the balloon driver. First the OS will assign pages marked as free and if this is not enough the OS will move less important pages to the OS swap file to free up pages and assign these to the balloon driver. Next the balloon driver tells ESX which pages it has received and since the balloon driver will not actually use them, ESX can safely re-use these pages for other VMs.
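The order in which the guest gives up pages can be sketched as follows. This is a deliberately simplified model: the `GuestOS` class, the page names and the "least important first" ordering are hypothetical stand-ins for whatever reclaim algorithm the real guest OS uses.

```python
class GuestOS:
    """Toy guest: tracks free pages, in-use pages and its own swap file."""

    def __init__(self, free_pages, used_pages):
        self.free_pages = free_pages
        # In-use pages, ordered from least to most important (assumed ranking).
        self.used_pages = list(used_pages)
        self.guest_swap = []   # pages the guest moved to its own swap file
        self.balloon = 0       # pages currently held by the balloon driver

    def inflate_balloon(self, wanted):
        """Hand up to `wanted` pages to the balloon driver; return how many."""
        # Step 1: satisfy the request from pages the guest already marks as free.
        from_free = min(wanted, self.free_pages)
        self.free_pages -= from_free
        # Step 2: if that is not enough, page out the least important pages.
        still_needed = wanted - from_free
        paged_out = self.used_pages[:still_needed]
        self.used_pages = self.used_pages[still_needed:]
        self.guest_swap.extend(paged_out)
        granted = from_free + len(paged_out)
        self.balloon += granted
        # Step 3: the hypervisor can now reuse `granted` pages for other VMs.
        return granted


guest = GuestOS(free_pages=100, used_pages=["cache-a", "cache-b", "app-1"])
reclaimed = guest.inflate_balloon(101)
print(reclaimed, guest.free_pages, guest.guest_swap)
```

The point of the sketch is that the guest, not the hypervisor, decides which pages to give up, which is why ballooning is so much cheaper than host swapping.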

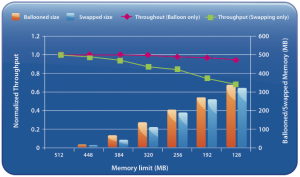

As with TPS, there is a performance penalty for ballooning, but again the penalty is very low. The graph shows that even when reclaiming 3/4 of the VM’s memory, the performance penalty is only 3%. For swapping host memory, the throughput loss is about 34%, which is a huge difference compared to the 3% for ballooning.

FIRST CONCLUSION: No performance hits !!!

The conclusion to draw from this is that the performance impact of Transparent Page Sharing and ballooning is negligible and memory overcommit is NOT a performance hit !!!

Let’s move on to the remaining misconceptions on memory overcommit.

Real world workloads don’t benefit.

A common misconception is that TPS and ballooning will not work for the majority of applications. I have done a lot of searching for problems with applications in a virtual environment that could lead back to TPS or ballooning but could only find two situations in which there is a “problem” with TPS or ballooning. One situation is when running a Java application.

Copied from Scott Drummonds’ website:

“Java provides a special challenge in virtual environments due to the JVM’s introduction of a third level of memory management. The balloon driver draws memory from the virtual machine without impacting throughput because the guest OS efficiently claims pages that its processes are not using. But in the case of Java, the guest OS is unaware of how the JVM is using memory and is forced to select memory pages as arbitrarily and inefficiently as ESX’s swap routine. Neither ESX nor the guest OS can efficiently take memory from the JVM without significantly degrading performance. Memory in Java is managed inside the JVM and efforts by the host or guest to remove pages will both degrade Java applications’ performance. In these environments it is wise to manually set the JVM’s heap size and specify memory reservations for the virtual machine in ESX to account for the JVM, OS, and heap.“

The second exception I found is not really a problem but only something to keep in mind when working with the newer type of CPUs that can do Virtualized MMU. Read this post from Duncan at Yellow-Bricks: “Virtualized MMU and Transparent page sharing” http://www.yellow-bricks.com/2009/03/06/virtualized-mmu-and-tp/. In this post Duncan explains that when using large pages (2MB) in combination with a CPU that uses virtualized MMU “the ESX page sharing technique might shares less memory when large pages are used instead of small pages“ and “When free machine memory is low and before swapping happens, the ESX Server kernel attempts to share identical small pages even if they are parts of large pages. As a result, the candidate large pages on the host machine are broken into small pages. This can degrade performance at a point in time when you don’t want performance degradation. Keep this in mind when deploying VMs with virtualized MMU enabled, make your decision based on these facts! Do performance testing on what effect overcommiting will have on your environment when virtualized MMU is enabled.“

Second Conclusion: Workloads not compatible with TPS or ballooning:

– Java is the only known application that can have a performance hit when ballooning kicks in.

– When using V-MMU compatible CPUs in combination with large pages, a performance degradation can be expected when ballooning kicks in.

The memory gain by TPS is negligible

How much memory would you expect to gain from using TPS? Keep in mind that TPS works at page level, not only between VMs with the same Guest OS, but across all Guest OSes. Not just over VMs but also within the same VM. You could theoretically already save memory through TPS with just one VM running on your host.

Looking at real world examples provided to me by a number of people running ESX in production, it is clear that TPS saves a lot of memory. See the table below:

| Physical RAM (MB) | Saving (MB) |
| --- | --- |
| 40,958 | 36,281 |
| 32,767 | 11,579 |
| 49,142 | 13,208 |
| 49,142 | 7,673 |
| 131,069 | 46,086 |
| 131,069 | 53,788 |
| 65,534 | 28,713 |
| 32,767 | 21,968 |
| 32,767 | 8,725 |
| 24,575 | 11,081 |
| 24,575 | 9,096 |
| 16,215 | 11,434 |
| Totals: 630,580 | 259,632 |
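For anyone who wants to check the totals or express the saving as a share of physical RAM, a few lines of Python are enough. The figures are copied from the table; the variable names are just mine.

```python
# (physical RAM in MB, TPS saving in MB) per host, copied from the table.
hosts = [
    (40958, 36281), (32767, 11579), (49142, 13208), (49142, 7673),
    (131069, 46086), (131069, 53788), (65534, 28713), (32767, 21968),
    (32767, 8725), (24575, 11081), (24575, 9096), (16215, 11434),
]

total_physical = sum(physical for physical, _ in hosts)
total_saved = sum(saved for _, saved in hosts)
saved_pct = 100 * total_saved / total_physical

print(f"physical: {total_physical} MB, saved: {total_saved} MB "
      f"({saved_pct:.1f}% of physical RAM)")
```

Across these twelve hosts the saving works out to a bit over 41% of the installed physical RAM.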

Third conclusion: Memory savings by TPS:

– As the table shows, memory savings thanks to TPS can be really huge and the price of memory saved by TPS is easily worth the extra buck for ESX licenses.

Overcommitment is dangerous

In the paragraphs above I think I made clear that there is no reason NOT to use TPS, except for specific applications. The only reason left for some people not to use TPS is that if you just keep on filling your host with VMs, it will soon start swapping (which is the bad form of overcommit), and they’re absolutely right. But may I ask this question: “Don’t you monitor what you are doing?”.

When I have a one liter bottle and I want to fill it with water, while filling it I usually keep an eye on (monitor) how much water I already poured into it and stop just before it’s full. I think this same practice can be used in many places, even in IT infrastructures.

Take the number of users on your Citrix hosts: I bet you monitor performance and start adding more hosts when certain limits are reached. Or adding data to your disks: I bet you check daily that your disks aren’t near their limit. When creating snapshots of VMs in your virtual infrastructure, you check that these snapshots aren’t filling up your datastore, and please, do tell me you keep a sharp eye on those thin provisioned disks. Now, if you do all that and consider those techniques safe, I can’t see why overcommitment would be dangerous. Just monitor !!!
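What "just monitor" could look like in its simplest form is sketched below. This is an illustration only: the function name, the four counters and the 85% warning threshold are assumptions of mine, not values taken from esxtop or vCenter, and you would feed it with whatever your own monitoring tool reports.

```python
def memory_alert(physical_mb, used_mb, ballooned_mb=0, swapped_mb=0, warn_at=0.85):
    """Return a coarse status for one host from four hypothetical counters."""
    if swapped_mb > 0:
        # Host swapping means we crossed into bad overcommit.
        return "critical: host is swapping"
    if ballooned_mb > 0:
        # Ballooning is the phase in between: the host is low on free memory.
        return "warning: ballooning active"
    if used_mb / physical_mb >= warn_at:
        return "warning: little headroom left for spikes"
    return "ok"


# The 17-VM example: 42.5GB in use on a 48GB host, nothing ballooned or swapped.
print(memory_alert(physical_mb=48 * 1024, used_mb=42.5 * 1024))
```

The order of the checks mirrors the post: swapping is the thing to avoid at all costs, ballooning is the early warning, and headroom is what you watch while things are still fine.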

Fourth conclusion: Danger of overcommitting

– There is no danger, no hidden risks, just as long as you monitor what you are doing.

I would like to thank my Twitter friends who promptly responded with esxtop screenshots when I called out for help on Twitter. Thanks guys.

And a big thank you to Duncan and Scott for providing great posts which helped me put together this post. Thanks.

Sources:

| Source | URL |
| --- | --- |
| Memory Resource Management in VMware ESX Server by Carl A. Waldspurger | http://www.waldspurger.org/carl/papers/esx-mem-osdi02.pdf |
| Virtualized MMU and Transparent page sharing by Duncan Epping | http://www.yellow-bricks.com/2009/03/06/virtualized-mmu-and-tp/ |
| VMware communities | http://communities.vmware.com/message/251948 |
| Understanding Memory Resource Management in VMware ESX Server | http://www.vmware.com/resources/techresources/10062 |
| ESX Memory Management: Ballooning Rules | http://vpivot.com/2009/09/25/esx-memory-management-ballooning-rules/#more-10 |
| Solid State Disks and Host Swapping by Scott Drummonds | http://vpivot.com/2009/12/24/solid-state-disks-and-host-swapping/ |
| Large Page Performance | http://www.vmware.com/files/pdf/large_pg_performance.pdf |
| Memory Overcommitment in the Real World | http://blogs.vmware.com/virtualreality/2008/03/memory-overcomm.html |

Some of my real world data:

Physical: 32GB Used: 22.3GB Saving: 8536MB VMs: 11

Physical: 64GB Used: 31.8GB Saving: 3443MB VMs: 22

Physical: 64GB Used: 55.1GB Saving: 3491MB VMs: 24

Physical: 64GB Used: 47.2GB Saving: 3824MB VMs: 29

Physical: 64GB Used: 55.7GB Saving: 3566MB VMs: 24

Physical: 24GB Used: 19.1GB Saving: 3MB VMs: 12

Physical: 24GB Used: 22.3GB Saving: 2MB VMs: 10

Physical: 24GB Used: 21.3GB Saving: 2MB VMs: 12

Physical: 24GB Used: 21.6GB Saving: 0MB VMs: 14

Total: 384GB Saving: 22.6GB (~5% ?)

I don't turn it off, but it doesn't seem to provide any real benefit with the majority of my apps.

Which stats are you using to get these numbers? The data I use is from esxtop. When you start esxtop, press m (memory) then watch the first line for overcommit ratio ( 0.0 or 0.2 = 20% or 0.7 = 70%).

The row PSHARE tells you the amount of savings and the amount used.

Thx for commenting.

Gabrie

Nice piece of work Gabe, it always pays to explore this sort of question with rationality rather than religious zeal. I'm not sure about 'YES YES YES' though, more like 'In most cases yes, but you always need to plan carefully and monitor closely'

Simon Bramfitt

I more or less agree but some of the “details” don't seem to add up.

I think your example is poorly chosen for the “bad overcommit scenario” as active memory actually doesn't say anything about the amount of already deduped pages. In other words you can easily have 20 VMs with 2.5GB of active memory on a 48GB host if they deduped 15GB due to similarities in the OS. (looking at the numbers you provide there's an average saving of 21GB)

Also the part about ballooning seems to be incorrect. The balloon driver doesn't force free pages to be reclaimed but forces the OS to use its algorithms to reclaim pages which are less important or less used. It seems you are mixing “idle memory tax” with the balloon driver.

Like I mentioned on Twitter: Best practice for SAP on VMware is not to overcommit memory. Currently best practice for SAP on vSphere is to not size the VM greater than a NUMA node.

For more info see: http://www.vmware.com/files/pdf/perf_vsphere_sa…

And to find out how much you save on physical memory with TPS, here is a nice formula:

[math]::round(((Get-View -ViewType HostSystem |?{$_.Runtime.ConnectionState -eq "connected"} | %{((((./Get-Stat2.ps1 -entity $_ -stat "mem.shared.average" -Interval RT) | measure-object -Property value -Average).Average) - ((((./Get-Stat2.ps1 -entity $_ -stat "mem.sharedcommon.average" -Interval RT) | measure-object -Property value -Average)).Average))}) | Measure-Object -Sum).Sum /1Mb,2)

Credit goes to NiTRo at http://www.hypervisor.fr/?p=1282

Cheers,

Didier

Awesome post. Thanks for sharing!

Changed 2 sections in my post to be technically more correct. Thanks for the comment.

This is full of assumptions: that the VMs behave the same way, that each VM needs memory from 2.5 to 4 GB and that the VMs do not use up the memory all at the same time.

I agree that memory overcommit is a great feature and people shouldn't be afraid of it. What frustrates me is seeing other hypervisor vendors say in one breath that memory overcommit is “dangerous” and then in the next breath say “we're going to add this feature in the future.” Does it suddenly become not dangerous when it is in your product? You can't have it both ways.

The other thing that you might want to mention is the power of TPS on truly identical workloads, such as in the case of VDI. Greater memory sharing means better VDI density at a lower cost. I can't see running VDI workloads on any other hypervisor because of this reason.

Nice post Gabe, I enjoyed reading it.

No, this is not full of assumptions. It is an example to show the difference between good and bad overcommit. In the example I want to make clear when it is ok to overcommit and when it is bad to overcommit.

The difference between 4GB and 2.5GB is purely to show that if your VMs are not using all of their assigned RAM except at some peak moments, you can re-use that unused RAM for other VMs. That is the big difference with other hypervisors, where a 4GB RAM VM will always use 4GB of physical RAM, no matter how much the Guest OS is really using.

It was a simplified example to explain the theory.

Although in the last section, you can see real world examples on how much saving is obtained using TPS. This is data gathered from ESX hosts (3.5/4.0) running in production supplied by a number of people I know through twitter.

If you have more questions, feel free to mail me.

Regards

Gabrie

Getting the total/used from the vsphere client, getting the “Saving” from esxtop

Some real data, from a customer cluster, in full production. I looked at 4 hosts from 10 available:

Host 1 – 16 VMs – 12GB Shared – 30 GB Used – 64GB Total

Host 2 – 14 VMs – 11GB Shared – 30 GB Used – 64GB Total

Host 3 – 15 VMs – 10GB Shared – 32 GB Used – 64GB Total

Host 4 – 25 VMs – 30GB Shared – 32 GB Used – 64GB Total

Yes, I have a host right now, with 30GB Shared (I looked many times, and confirmed with the performance data on vCenter to be sure).

These are 99% Windows Server 2003 VMs, which explains the huge amount of page sharing.

The hosts still have too much available memory, but would probably be near the limits without TPS. Also, MEMCTL is zero in all cases, so ballooning did not even kick in. I can *easily* double the VM density in this situation, since the memctl driver would save even more memory.

Data from real world, better than any marketing blah blah… Thx Fernando!

First great post, thank you for it. When I thought about good over commitment, first thing that came to mind was mixing work load, which will definitely give more opportunity for memory over commitment.

Great explanation Gabe. Thanks.