Most customers I work with use FC connections to the EMC storage we’ve sold them, but today I was with a customer who has a complete Dell setup and uses iSCSI to connect to their Dell EqualLogic PS4000E storage over a set of Dell PowerConnect switches. I was asked to configure the dependent hardware iSCSI NICs.

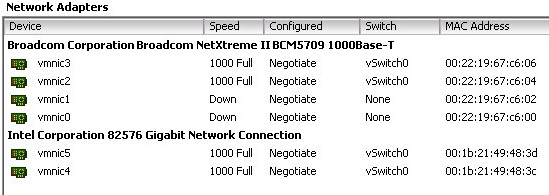

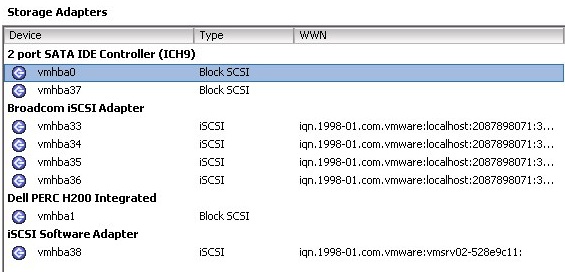

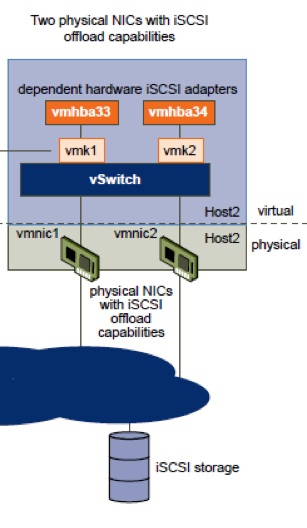

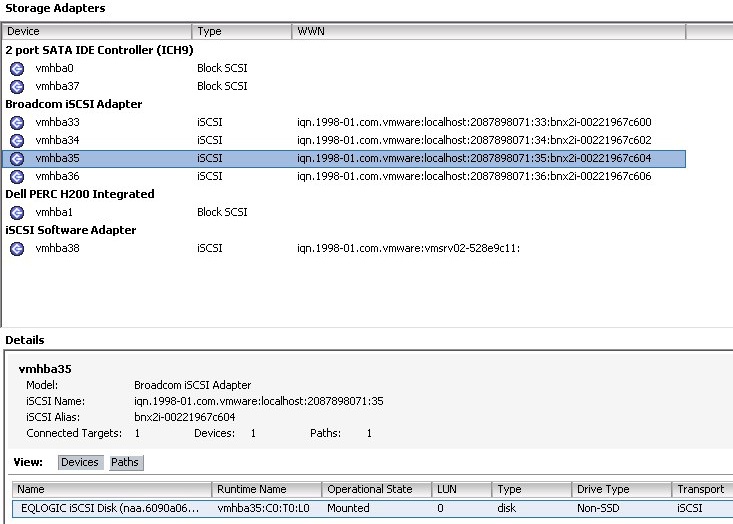

The Dell PowerEdge R610 hosts had 2 Intel 82576 NICs and 4 Broadcom BCM5709 NICs. The BCM5709 NICs offer 1 Gbit networking but also dependent hardware iSCSI. A dependent hardware iSCSI adapter depends on VMware networking and on the iSCSI interfaces provided by VMware. You’ll notice that a dependent hardware iSCSI adapter presents itself in the ESXi configuration section both under “Network Adapters” and as a vmhba under “Storage Adapters”.

To configure the adapters for iSCSI, we first have to determine which vmhba adapter is connected to which interface. The easiest way is to right-click the vmhba adapter and open its properties. There you’ll see an identifier for this vmhba that contains its MAC address: bnx2i-00221967c604. The last part, 00221967c604, is the MAC address. Next, check in the Network Adapters list which vmnic has this MAC address; in this example it is vmnic2. Check your network drawing and determine the IP address you want to assign to this iSCSI interface.
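The MAC lookup above can be sketched in Python. This assumes the identifier always has the form `bnx2i-` followed by 12 hex digits, as in the example; the `VMNIC_MACS` inventory and the vmnic3 address are hypothetical placeholders you would fill in from the Network Adapters list.

```python
from __future__ import annotations

import re

# Hypothetical inventory copied by hand from "Network Adapters".
# vmnic3's MAC is a made-up placeholder.
VMNIC_MACS = {
    "vmnic2": "00:22:19:67:c6:04",
    "vmnic3": "00:22:19:67:c6:06",
}


def mac_from_bnx2i_name(name: str) -> str:
    """Extract the MAC address from an identifier like 'bnx2i-00221967c604'."""
    match = re.fullmatch(r"bnx2i-([0-9a-fA-F]{12})", name)
    if match is None:
        raise ValueError(f"unexpected adapter identifier: {name!r}")
    digits = match.group(1).lower()
    # Put a colon between every pair of hex digits.
    return ":".join(digits[i:i + 2] for i in range(0, 12, 2))


def vmnic_for_vmhba(name: str, vmnic_macs: dict[str, str]) -> str | None:
    """Return the vmnic whose MAC matches the vmhba identifier, or None."""
    mac = mac_from_bnx2i_name(name)
    for vmnic, nic_mac in vmnic_macs.items():
        if nic_mac.lower() == mac:
            return vmnic
    return None


print(vmnic_for_vmhba("bnx2i-00221967c604", VMNIC_MACS))  # vmnic2
```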

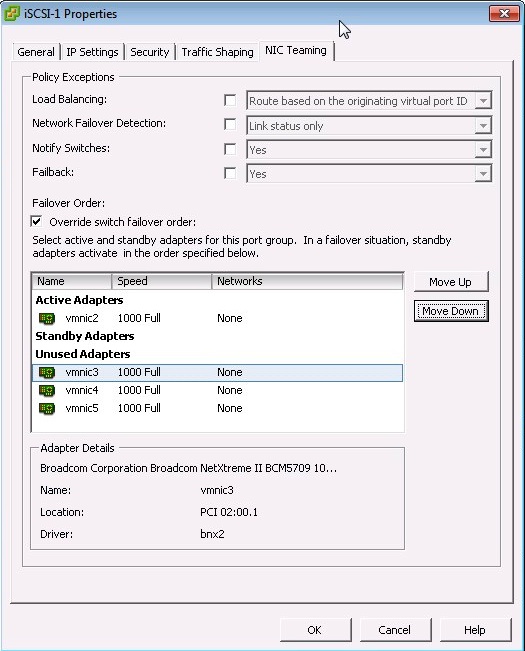

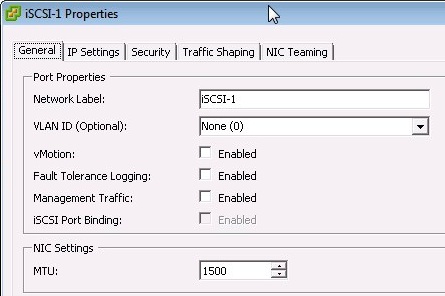

We’ll now create a vmkernel interface with just one vmnic connected to it. This is very important! Where you would normally configure standby adapters, you now add only one active adapter and set all other adapters to “unused”. In this specific configuration that meant setting vmnic3, vmnic4 and vmnic5 to unused and adding only vmnic2 as active.
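The “one active, everything else unused” rule can be expressed as a small check. This is a sketch over a hypothetical `TeamingPolicy` model of the portgroup’s NIC teaming settings, not a vSphere API; it returns a list of problems, and an empty list means the policy follows the rule.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TeamingPolicy:
    """Hypothetical model of a vmkernel portgroup's NIC teaming settings."""
    active: list[str]
    standby: list[str] = field(default_factory=list)
    unused: list[str] = field(default_factory=list)


def check_iscsi_teaming(policy: TeamingPolicy, uplinks: list[str]) -> list[str]:
    """Return problems that would break the one-vmnic-per-vmkernel rule."""
    problems = []
    if len(policy.active) != 1:
        problems.append(f"expected exactly one active uplink, got {policy.active}")
    if policy.standby:
        problems.append(f"standby uplinks should be unused: {policy.standby}")
    expected_unused = set(uplinks) - set(policy.active)
    if set(policy.unused) != expected_unused:
        problems.append(
            f"unused should be {sorted(expected_unused)}, got {sorted(policy.unused)}"
        )
    return problems


uplinks = ["vmnic2", "vmnic3", "vmnic4", "vmnic5"]
iscsi_1 = TeamingPolicy(active=["vmnic2"], unused=["vmnic3", "vmnic4", "vmnic5"])
print(check_iscsi_teaming(iscsi_1, uplinks))  # []
```

A policy with vmnic3 left as standby would be reported twice: once for the standby entry and once because vmnic3 is missing from the unused list.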

You can either use one vSwitch for all portgroups or use two vSwitches and assign one iSCSI vmkernel interface to each vSwitch. When using two vSwitches, you MUST use different IP ranges (subnets) for the iSCSI vmkernel interface on each vSwitch.
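The subnet rule for the two-vSwitch layout can be checked with Python’s standard `ipaddress` module. The vSwitch names and IP addresses below are hypothetical placeholders; the function reports every pair of vSwitches whose iSCSI vmkernel interfaces overlap in the same subnet.

```python
from __future__ import annotations

import ipaddress
from itertools import combinations


def shared_subnets(vmk_by_vswitch: dict[str, str]) -> list[tuple[str, str]]:
    """Map of vSwitch -> vmkernel address in CIDR form; return clashing pairs."""
    networks = {
        vswitch: ipaddress.ip_interface(cidr).network
        for vswitch, cidr in vmk_by_vswitch.items()
    }
    return [
        (a, b)
        for a, b in combinations(sorted(networks), 2)
        if networks[a].overlaps(networks[b])
    ]


# Hypothetical addressing: one /24 per vSwitch, as required.
good = {"vSwitch1": "10.10.1.11/24", "vSwitch2": "10.10.2.11/24"}
print(shared_subnets(good))  # []
```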

- Right-click the vSwitch and choose Properties.

- Add the vmnic if this has not already been done.

- Edit the NIC teaming properties and make sure vmnic2 is the only active adapter; set all other vmnics to unused

- Click Add and create a new vmkernel portgroup

- Name it iSCSI-1

- Enter the IP address

- Click Finish

The newly created vmkernel interface shows in the vSwitch.

If your configuration (network switches, storage switches and storage interfaces) supports jumbo frames, you can set the MTU size on the vmkernel portgroup to 9000.

Attention: according to “Dell PowerConnect and Jumbo Frames”, the Dell PowerConnect switches require an MTU of 9216.

Attention: Jumbo frames are NOT supported for the BCM5709 cards in ESXi4, but there IS support for Jumbo frames on the BCM5709 cards with ESXi5 according to KB1025644.
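Jumbo frames only help if every hop on the path accepts them. The sketch below lists the hops configured below 9000 and computes the smallest MTU on the path. The hop names and values are hypothetical, and it compares every setting as a plain number, so a switch frame-size setting such as the PowerConnect’s 9216 is treated like an MTU. It cannot tell you whether a driver supports jumbo frames at all, which is the BCM5709 question above.

```python
from __future__ import annotations


def hops_below_mtu(hops: dict[str, int], required: int = 9000) -> list[str]:
    """Return the names of hops whose configured MTU is below `required`."""
    return [name for name, mtu in hops.items() if mtu < required]


def path_mtu(hops: dict[str, int]) -> int:
    """The largest MTU usable end to end is limited by the smallest hop."""
    return min(hops.values())


# Hypothetical path; the values are placeholders, not read from a real host.
hops = {"vmk1": 9000, "vSwitch1": 9000, "PowerConnect port": 9216, "EqualLogic port": 1500}
print(hops_below_mtu(hops))  # ['EqualLogic port']
print(path_mtu(hops))        # 1500
```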

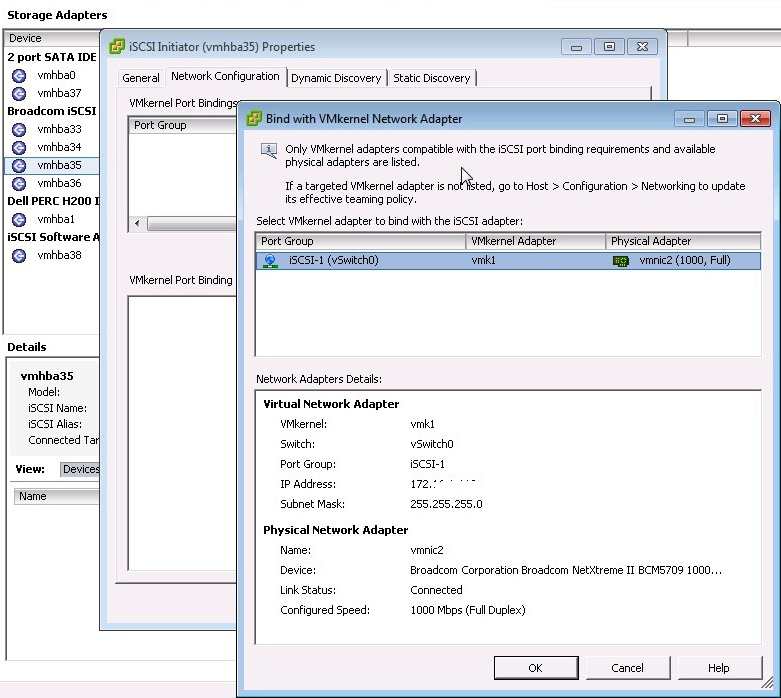

After configuring the vmkernel interface, we now need to bind it to the vmhba. Switch to the Storage Adapters section and select the vmhba that corresponds to the vmnic you just configured the vmkernel interface for. Select Properties and go to the second tab, “Network Configuration”. Now click “Add” to connect the vmkernel interface.

Go to the next tab, “Dynamic Discovery”, and enter the IP address of the storage you need to connect to or, if your setup requires it, add a static discovery entry on the next tab. On the first tab you can set CHAP authentication if needed. After pressing OK and rescanning the vmhba, you should see the LUN(s) that have been presented to your host.
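The same three GUI steps (bind, dynamic discovery, rescan) can also be done with `esxcli` on ESXi 5.x. The Python helper below only builds and prints the commands; it does not run them. The adapter name `vmhba33`, `vmk1` and the storage IP are hypothetical, and you should verify the syntax against `esxcli --help` on your own build before using it.

```python
from __future__ import annotations

import shlex


def esxcli_bind_and_discover(
    vmhba: str, vmk: str, target: str, port: int = 3260
) -> list[list[str]]:
    """Build (but do not run) the esxcli commands for one vmhba."""
    return [
        # Bind the vmkernel interface to the dependent hardware iSCSI adapter.
        ["esxcli", "iscsi", "networkportal", "add", f"--adapter={vmhba}", f"--nic={vmk}"],
        # Add the storage group IP as a SendTargets (dynamic discovery) address.
        ["esxcli", "iscsi", "adapter", "discovery", "sendtarget", "add",
         f"--adapter={vmhba}", f"--address={target}:{port}"],
        # Rescan the adapter so the presented LUNs show up.
        ["esxcli", "storage", "core", "adapter", "rescan", f"--adapter={vmhba}"],
    ]


for cmd in esxcli_bind_and_discover("vmhba33", "vmk1", "10.10.1.100"):
    print(shlex.join(cmd))
```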

To connect the second vmhba, follow the same steps again. Create vmkernel portgroup iSCSI-2 and this time make vmnic3 (or another vmnic in your case) the only active adapter. Next, bind it to the corresponding vmhba. Of course you want to test whether iSCSI failover works after you’re done configuring. The easiest test is to shut down the port on the physical switch connected to the first vmhba and see whether the failover works.
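Before pulling a switch port, it can help to reason about which LUNs would survive. This is a planning sketch over a hypothetical `Path` model (vmhba, vmnic and LUN names are placeholders). It does not replace the real test of shutting down the port and watching the paths fail over.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    """Hypothetical model of one iSCSI path: adapter, uplink, LUN."""
    vmhba: str
    vmnic: str
    lun: str


def luns_without_path(paths: list[Path], down_vmnics: set[str]) -> set[str]:
    """LUNs that would lose every path if the switch ports of down_vmnics were shut."""
    all_luns = {p.lun for p in paths}
    surviving = {p.lun for p in paths if p.vmnic not in down_vmnics}
    return all_luns - surviving


paths = [Path("vmhba33", "vmnic2", "lun0"), Path("vmhba34", "vmnic3", "lun0")]
print(luns_without_path(paths, {"vmnic2"}))  # set()
```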

Using jumbo frames with the Broadcom NICs as hardware iSCSI HBAs was not supported in the past and I don’t think it’s been fixed yet. Did you test the configuration you outlined here with jumbo frames all the way through? (vmk, vSwitch, switch, etc.)

Hi,

According to KB1025644, jumbo frames ARE supported in ESXi5 but NOT in ESXi4. My post is about ESXi5, but I’ll update it and add this extra information.

Thank you for the reply.

Gabrie

Why do I have to use two different IP ranges (subnets?) on the iSCSI vmkernel interfaces? What will happen if I don’t?

According to the vSphere SAN configuration guide: “If you use separate vSphere switches, you must connect them to different IP subnets. Otherwise, VMkernel adapters might experience connectivity problems and the host will fail to discover iSCSI LUNs.”

Wouldn’t the software initiator be better for performance, because we can do load balancing with it whereas the HBA can’t? If I remember correctly, that was the case in ESXi 4/4.1.

Perhaps that was a typo in the KB article. Right now, it says there’s no jumbo support for either 4 or 5, and its last edit date is today.

This is similar to what is presented with the PE R510s as well. I have a pair of them connected to my EqualLogic group. For the sake of simplicity and consistency, why not just use SW iSCSI across all NICs? I’ve read about several ‘adventures’ trying to get the Broadcoms and their respective TOE to work with iSCSI. Didn’t sound like fun. What are your thoughts?

Thx for your configuration post.

I’m trying to configure dependent hardware iSCSI with the NC382T, which is also listed in KB1025644 (TOE/TSO), and the configuration is OK. Jumbo frame configuration is allowed.

However, in the end iSCSI device/path discovery doesn’t work.

KB1025644 says the following about dependent hardware iSCSI configuration:

With ESX/ESXi 4.x and 5.0 these network cards configured as dependent Hardware iSCSI initiators do not support Jumbo Frames (MTU 9000) or IPv6 while they are supported when the network cards configured as software iSCSI initiators.