Last week a customer asked me to build a new VMware vSphere 5 infrastructure in which they would like to use Auto Deploy for the ESXi 5 hosts. Of course I told them that Auto Deploy was a first release, but I didn’t push that too hard, because designing and deploying it for a real production environment is also a challenge I hadn’t been able to take up yet. The environment consists of two sites; each site has an EMC VNX 5300 storage array which replicates to its partner. Per site we have 2 Cisco UCS blade centers in which 10 blades have been assigned to the VMware vSphere 5 environment. Each blade uses a converged FCoE adapter, which is presented to the ESXi host as two physical NICs and two physical HBAs.

My series on VMware vSphere 5 Auto Deploy:

vSphere 5 – How to run ESXi stateless with vSphere Auto Deploy

vSphere 5 Auto Deploy PXE booting through Cisco ASA firewall

Updating your ESXi host using VMware vSphere 5 Auto deploy

My first Auto Deploy design for real production environment

vCenter and ESXi host configuration

The vCenter design also includes a VMware Site Recovery Manager 5 design: should site-A fail, we can quickly fail over to site-B using VMware SRM, and vice versa. vCenter is deployed as a virtual machine and the Auto Deploy service will be running on the vCenter Server.

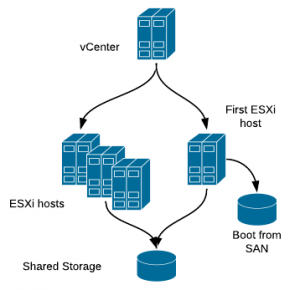

Should site-A fail because of a power failure, it would be impossible to get the ESXi hosts up and running again, since the vCenter VM would be down, including the Auto Deploy service. If the vCenter VMs were running in a cross-site design (vCenter for site-A running on ESXi hosts on site-B), the ESXi hosts would be able to boot once power is restored to site-A, because they would boot using vCenter and Auto Deploy from site-B. However, for a VMware SRM design this would give me problems, since losing site-A would also mean losing vCenter-B, which I need to set the wheels of a VMware SRM site recovery in motion. To make a quick recovery possible after all ESXi hosts went down, I decided to have the first ESXi host in the blade center boot from SAN and install ESXi on the “local” boot-from-SAN disk of 5 GB. The vCenter VM, together with the SQL VM, will always run on this first ESXi host.

Network

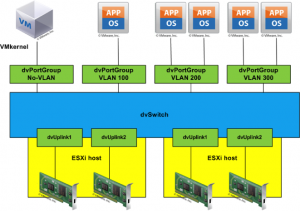

Between the two sites, the management VLAN that I will be using is a stretched layer 2 VLAN. On this VLAN the customer has a clustered DHCP solution. My first thought was to give each blade a separate physical NIC for Auto Deploy, because during the boot process only the native VLAN can be used. Since the customer didn’t use the native VLAN for anything else, like switch monitoring, I was free to redirect the native VLAN to the management VLAN. In other words, on the physical switch they created trunk ports to the ESXi hosts on which the management VLAN is passed as if it were their native VLAN. The network design is based on the distributed vSwitch.

DHCP configuration

Since the customer isn’t that experienced yet using Auto Deploy, I wanted them to have to do as little management as possible on the PowerShell command line. Therefore I created a ruleset that included a large range of IP addresses which enables them to assign new blades by only changing a DHCP entry.

At the start of this project there are 10 blades on each site, but since the whole IP range was mine to use, I reserved 20 IP addresses on each site and used these in the PowerShell rules. The following is the code I used to create the rule:

New-DeployRule -Name "Site-A-Rule" -Item "ESXi-5.0.0-20111204001-standard", "Site-A", "Site-A-Basic" -Pattern "ipv4=192.168.0.100-192.168.0.120"

The above code creates a rule named “Site-A-Rule” that will deploy image profile “ESXi-5.0.0-20111204001-standard” to the Auto Deploy hosts and add them to cluster “Site-A”, using host profile “Site-A-Basic”, if they meet the requirement of their IPv4 address being between 192.168.0.100 and 192.168.0.120.

If they decide to add an extra blade to their current environment, all they need to do is add a new DHCP reservation based on the MAC address in the DHCP server, and the new blade will be added to the cluster.
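Before handing out a new reservation it can help to check that the chosen address really falls inside the range used in the rule. The Python below is a minimal sketch of that check, not something Auto Deploy provides: the function names are made up for illustration, and it assumes that both ends of the range in the pattern are inclusive.

```python
import ipaddress

# Range copied from the -Pattern argument of the deploy rule above.
RULE_PATTERN = "ipv4=192.168.0.100-192.168.0.120"


def parse_ipv4_range(pattern):
    """Split an 'ipv4=<first>-<last>' pattern into two IPv4Address objects."""
    key, _, value = pattern.partition("=")
    if key != "ipv4":
        raise ValueError("not an ipv4 pattern: " + pattern)
    first, _, last = value.partition("-")
    return ipaddress.IPv4Address(first), ipaddress.IPv4Address(last)


def in_rule_range(ip, pattern=RULE_PATTERN):
    """Return True if ip lies in the range (assumption: both ends inclusive)."""
    first, last = parse_ipv4_range(pattern)
    return first <= ipaddress.IPv4Address(ip) <= last


def range_size(pattern=RULE_PATTERN):
    """Count the addresses in the range, both ends included."""
    first, last = parse_ipv4_range(pattern)
    return int(last) - int(first) + 1
```

For example, `in_rule_range("192.168.0.121")` is false, so a blade reserved at that address would not match the rule. Under the inclusive assumption, `range_size()` counts 21 addresses for the pattern as written (.100 through .120).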

DHCP entries:

- Per host a reservation based on MAC address

- Use the required DNS name in the reservation for the ESXi host

- The following DHCP records are needed for the ESXi host to find the PXE boot server:

- 066 – Boot server host name: <ip of TFTP / PXE boot server>

- 067 – Boot file name: undionly.kpxe.vmw-hardwired

Host configuration and host profiles

This was a bit of a painful experience. I had expected most problems in the Auto Deploy part of the implementation, but the host profiles turned out to be the real problem.

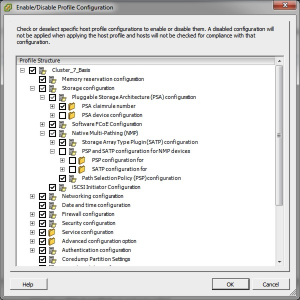

I have created two profiles per site. The first profile is used for the host that boots from SAN and the other profile is for the remaining hosts on that site. I did this because of an issue with compliance failures as described in KB 2002488. By creating a separate profile for the host that boots from SAN, the KB workaround can be avoided. When using the workaround you would also lose the ability to control the Path Policy (PSP) settings.

Another important KB is KB 2001994, which lists a few options that cannot be used in host profiles. To me, the following options are the most important ones:

- ScratchConfig.ConfiguredScratchLocation

- ScratchConfig.CurrentScratchLocation

- Syslog.Local.DatastorePath

I’ve not yet found a good replacement for the scratch location. When checking the scratch location on a host, it is by default set to /tmp/scratch. Reading KB 1033696 I learned:

“It is recommended, but not required, that ESXi have a persistent scratch location available for storing temporary data including logs, diagnostic information and system swap.” Since logs and diagnostic data are redirected to the vCenter server, only temporary data is stored in the scratch location. And since Auto Deploy hosts are seldom updated using Update Manager, my guess is that the scratch location will rarely be used. Therefore I decided to leave the scratch location at the default /tmp/scratch.

Not having the Syslog.Local.DatastorePath isn’t such a big deal since you can use a syslog server, which is also included in vCenter 5:

- Syslog.global.loghost: udp://192.168.0.50:514

The diagnostics data is redirected to vCenter using the Network Coredump setting in the host profile. This can be found at:

- Networking Configuration > Network Coredump settings > Fixed Network Coredump policy.

To configure this for the reference host I used the following commands:

esxcli system coredump network set --interface-name vmk0 --server-ipv4 194.104.240.212 --server-port 6500

esxcli system coredump network set --enable true

Problems with host profiles

During configuration of this environment I ran into quite a few issues with host profiles. A quick list of minor and major issues:

Time intensive applying of changes

Making a change in the reference profile and then applying it to all the other hosts takes a lot of time. In the beginning there were no VMs yet, so I could put 9 hosts into maintenance mode at the same time and apply the new profile to all of them at once.

However, for each host you are again presented with a full list of all the changes to be applied and have to click “finish” for each host. To me the summary screen isn’t really needed, because you get the same info when checking the host for compliance. Even better would be if I could select all hosts and have vCenter make them compliant automagically by placing them into maintenance mode one by one and applying the profile.
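The one-by-one behaviour wished for above could be sketched as a small loop. The Python below is only an illustration under assumptions: the four callables (`is_compliant`, `enter_maintenance`, `apply_profile`, `exit_maintenance`) are hypothetical placeholders for whatever your tooling offers, not real vCenter or PowerCLI calls. The second attempt is an added assumption, based on the observation later in this post that applying a profile a second time sometimes clears a leftover compliance error.

```python
def remediate_one_by_one(hosts, is_compliant, enter_maintenance,
                         apply_profile, exit_maintenance, max_attempts=2):
    """Apply the profile host by host; return the hosts that stay non-compliant.

    All callables are hypothetical hooks supplied by the caller; each takes
    a single host identifier.
    """
    failed = []
    for host in hosts:
        if is_compliant(host):
            continue  # already compliant, host stays in production
        enter_maintenance(host)
        for _ in range(max_attempts):
            apply_profile(host)
            if is_compliant(host):
                exit_maintenance(host)  # back into production
                break
        else:
            # Still non-compliant after all attempts: leave the host in
            # maintenance mode so someone can look at it manually.
            failed.append(host)
    return failed
```

Only one host is out of production at any time while it is being remediated. Leaving a failed host in maintenance mode is a design choice in this sketch; stopping the whole run at the first failure would be an equally reasonable alternative.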

Profile configuration changes lost

When updating a profile from the reference host, the “Enable / disable profile configuration” settings are lost. You might want to use these settings because of KB 2002488 “Applying a host profile causes compliance failure”.

Network changes cause hosts to not reconnect

Changing the network configuration for a host often results in the host not reconnecting after a reboot. This mostly happens when the host has to switch to a distributed vSwitch. Resetting the network configuration using the DCUI for that host solves it.

Show differences

When I check a host for compliance and it turns out it is not compliant, I do see a list of the areas in which the host is not compliant, but it doesn’t show me WHY it isn’t compliant. When I need 10 changes to make it compliant that is no issue, but for just one tiny change, it would be great to see what exactly the difference is. Maybe I can just correct it manually and save myself the trouble of putting the host in maintenance mode, applying the profile and exiting maintenance mode.

Setting root password

When configuring a root password, it seems that host profiles don’t check for the correct password, or at least don’t check the password setting when checking for compliance. It also looks as if the “set fixed password” option is lost when updating the profile from the reference host.

Updating from reference host

When updating your current profile from the reference host, it would be nice if you could compare the changes. This would probably alert you to the fact that some settings will be lost or have to be set again, like the fixed password.

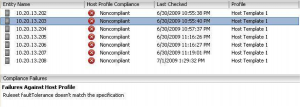

“DNS configuration doesn’t match the specification”

Often, when re-applying a number of settings at once, I still get a non-compliance error: “DNS configuration doesn’t match the specification”. After applying the profile a second time, this error disappears.

Ruleset FaultTolerance doesn’t match the specification

This is an error that sometimes appears and often disappears after setting a host into maintenance mode and exiting from maintenance mode, without having to re-apply the profile.

Conclusion

If your customer is very critical and wants everything to run smoothly 100% of the time, you’d better stay away from Auto Deploy at this moment, because the host profiles will give you some unpredictable results and sometimes issues that are only solved by more manual intervention. However, this never causes any production problems; it’s just that sometimes it takes a bit longer and more effort to apply the changes you wanted to apply, and this is always done on hosts while in maintenance mode. But then again, once stuff is running smoothly, you will hardly ever change your ESXi host configuration.

If I was an admin for a larger environment, I wouldn’t mind a little extra manual labor especially since even with these extra interventions, you’ll probably still have LESS work deploying and upgrading hosts using Auto Deploy than in earlier versions.

Update

In the comment section Tom Fotja posted a question that I want to answer in the post itself, because it adds to the design. The question from Tom was: “You say that the vCenter is always on the first blade. What if you do a maintenance of the first blade? You have to vMotion vCenter away. And then you end up with not very resilient set up. I think management cluster with statefull booting is a must for autodeploy.”

Yes, when performing maintenance on the first host, I vMotion the vCenter and SQL VM to the second host in the cluster. And yes, vCenter is less resilient at that point, because the second host is not running on local storage. The consequence would be that if the whole site fails at that moment, I would have trouble getting all my hosts running again, since they rely on vCenter.

My considerations on why having only one host on physical storage (boot from SAN) is enough in this cluster are as follows:

- The time an ESXi host is in maintenance mode is always relatively short. When performing an update or even reinstalling the host, it will be in maintenance mode for 30 minutes at most. Even when performing hardware changes, I think you won’t be in maintenance mode for more than one hour.

- Actually, with the UCS blade profiles being hardware independent, if extensive maintenance would have to be performed on the physical blade, I would probably first switch blades. I would give another blade the role of first ESXi host.

- When the first ESXi host is in maintenance mode and the second ESXi host would fail, VMware HA will pick up the failure of that host and bring vCenter and SQL online again.

With the above points I conclude that the maximum downtime of the first ESXi host is very low: a maximum of one hour. Since we all know Mr. Murphy visits you when you least expect it, it could still happen that the whole cluster goes down while the first host is in maintenance mode. Should that happen, recovery could be done in the following ways:

- When the first ESXi host was in maintenance mode because of updates, you could just restart it and reregister the vCenter and SQL VM to the host.

- When the first ESXi host was in maintenance mode because the blade was unavailable, a different blade can be assigned the profile of the first host and boot as if it is the first ESXi host.

- If the failure that hits the site at the most unfavorable time has a bigger impact and makes my blade center unavailable, I would have lost access to my SAN too, so my only recovery is VMware SRM.

The chances of this all happening are small enough to NOT buy an extra vCenter license for a management cluster. Also, this cluster would have to be in a different rack, a different blade center and on a different SAN to offer more resilience. The extra costs are too high for just that little extra safety.

This is great info Gabe, really cool to see auto deploy in a real environment. I hear you on the host profiles – I had an install of 24 blades that needed profiles. It’s not fun to have to apply the profile, wait for the screen to build, and then click finish for each host individually. I thought about PowerCLI, but ended up sliding in other activities in between the finish button clicks.

thanks for sharing your experiences, really helpful information! I’d like to know if you’re using a separate standard vSwitch for the Network Dump collector since vDS are not supported according to http://kb.vmware.com/kb/2000781

I didn’t know about that KB. Now I’m starting to doubt if the Network dump setting I used is really in effect on the hosts.

maybe you should force a kernel panic (vsish -e set /reliability/crashMe/Panic) to test if Dump Collector is actually working or not? If the customer allows you to do this is another question of course. ;)

Thanks for sharing this info. Always good to get some real life experience regarding what works and not.

You say that the vCenter is always on the first blade. What if you do a maintenance of the first blade? You have to vMotion vCenter away. And then you end up with not very resilient set up. I think management cluster with statefull booting is a must for autodeploy.

Thanks for your question, I replied in the blogpost under the “Update” Section

I got a question after building my auto deploy lab in preparation for our production environment:

What if you use something like Trend Micro Deep Security? There needs to be a Trend Micro appliance on every host. How can I achieve this with auto deploy, as the servers come up clean after each reboot?

Anyone who has an idea, please let me know.

Great post !

Great Post !! I have a question: I have set up the environment in my lab, and once the hosts get added to vCenter, the host profile doesn’t get applied automatically, as it has questions relating to vmkernel MAC, IP, subnet etc. The answer template holds good for the 1st ESXi host that I apply it to, and I don’t want to use the same template for the others. Is there a way I can direct the answer file to an XML file for the ESXi hosts with reference to their mgmt IP address?