A customer of mine wanted to move their VMware environment into the hosting environment that we at Open Line offer our customers. The initial plan was to shut down all their systems, physically move their storage and ESXi hosts to our site, and start everything up again. Once all systems were running again, we would migrate the VMs into our hosting environment.

Unfortunately, a health check of their storage showed that it had suffered quite a few issues over the years: several SPS (Standby Power Supply) failures, disk failures, and so on. Performance was bad at the time, and all in all these were enough warning signs for us to decide not to touch the storage, and certainly not to move it.

In a brainwave moment I thought that maybe Veeam Backup & Replication v6 could help us here. I had read about the replication feature many times but had never really used it in production. To my knowledge, though, this was a migration that could be done with Veeam Backup & Replication v6.

We drew up the following plan:

- At the customer site, make full backups weeks before migrating

- Import those backups at our site and restore the VMs into our vCenter

- Replicate changes daily from the customer site to our site, using the restored VMs at our site as “seeder” VMs

- During the migration weekend, shut down the VMs at the customer site, perform a last sync and then power on the VMs at our site.

Note: Their VM VLAN was completely separate from other servers and client systems, so it was very easy to recreate the VLAN with the same IP range at our site.

The design

I started with a one-proxy design. The Veeam Backup & Replication server at the customer site would also act as a proxy, and at our site we would install a proxy VM as well. This was a simple Windows 2008 R2 64-bit VM with 4 vCPUs, 4GB RAM, two network cards and no other software installed. Later on we added an extra proxy at both sites, again a basic Windows install with 4 vCPUs and 4GB RAM.

Those little things you run into

Of course a migration seldom goes as smoothly as planned, and this migration was no different.

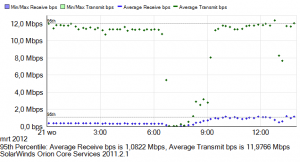

One important issue we had to fight was network throughput between the customer site and our site. This is a 10Mbit WAN connection, which was completely saturated whenever replication started. Since they also ran nightly file-level and application-level backups into our datacenter using EMC Avamar (we offer Backup as a Service), there was quite some ‘fight’ over line capacity at night.

When looking at the jobs after the syncs had been running for some days, I noticed that replication speeds varied a lot. One day a VM would be replicated very fast, the next day it took more than twice as long.

For example:

| Day | Changes to be written | Duration |
| --- | --- | --- |
| 1 | 2.7GB | 1h40 |
| 2 | 2.2GB | 1h07 |
| 3 | 1.9GB | 2h38 |
| 4 | 2.4GB | 1h00 |
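To make the figures above easier to compare, the following minimal sketch converts each run into an effective rate. It assumes 1 GB = 1000 MB, and it treats “changes to be written” as if that were the amount sent over the line, which ignores any compression or deduplication applied along the way. The function names are only for illustration.

```python
# Figures copied from the table above: (day, changed data in GB, duration as "HhMM").
RUNS = [(1, 2.7, "1h40"), (2, 2.2, "1h07"), (3, 1.9, "2h38"), (4, 2.4, "1h00")]


def duration_seconds(text: str) -> int:
    """Convert a duration such as '1h40' into seconds."""
    hours, minutes = text.split("h")
    return int(hours) * 3600 + int(minutes) * 60


def effective_mbit_per_s(gigabytes: float, seconds: int) -> float:
    """Effective rate in Mbit/s (assumption: 1 GB = 1000 MB, 1 byte = 8 bits)."""
    return gigabytes * 1000 * 8 / seconds


def summarize(runs):
    """Map each day to its effective rate, rounded to two decimals."""
    return {
        day: round(effective_mbit_per_s(gb, duration_seconds(duration)), 2)
        for day, gb, duration in runs
    }


if __name__ == "__main__":
    for day, rate in summarize(RUNS).items():
        print(f"day {day}: {rate} Mbit/s")
```

On these assumptions day 3 comes out at roughly 1.6 Mbit/s and day 4 at roughly 5.3 Mbit/s, so the same kind of job can differ by more than a factor of three from one night to the next.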

This made planning all those different replications very difficult, because you want them to run closely after each other but not too many at the same time. It is possible to add multiple VMs to one job, which makes them run immediately after each other, but I still wanted to be able to quickly start replication of a single VM, which is not possible if multiple VMs are combined into one job.

Adding a second proxy at both sites didn’t really help, because network bandwidth was still saturated and it also became obvious that storage IOPS were going to be a bottleneck as well.

Eventually I decided to pick the four VMs with the biggest change rate (each over 3GB of change per day) and run them every 2 hours. This was the only way to lighten the burden at night. Unfortunately, the first runs did not fit in my 2-hour window, so the jobs started “moving” to later times, and after the third replication all four started at the same time. That killed performance and I had to stop the jobs.

Check the VM health before you start

Some VMs turned out to still have older snapshots running, even some that didn’t show in the VMware vSphere Client snapshot manager. Make sure you remove or commit those before starting replication.

Two VMs had Diskeeper installed, which defragmented the VMs daily. This causes a LOT of blocks to change and therefore a lot of unnecessary data transfer. Remove or disable Diskeeper or any other defrag tool.

Inside the VM it is also worth checking whether you can do some optimizations before you start replicating. For example, this customer had a test database on their SQL server that was only 20MB in size, but since full logging was enabled and the database had never been backed up, the transaction log had never been truncated and had grown to 15GB!

Using “seeder” VMs

When Veeam Backup & Replication v6 was released I read and heard a lot about how you could use “seeder” VMs when replicating over a WAN. The seeder VM sits at the target site, and when you start replicating you don’t need to transfer blocks that are already present at the target site in the seeder VM. As sometimes happens, you think you know how it works without really reading the manual, and then you are in for a little surprise. So what went wrong?

Well, I thought I could use any VM as a seeder and that Veeam would then combine the blocks from the source VM and the seeder VM into a new VM, the target VM. That isn’t quite right. How it really works is that Veeam creates a snapshot ON the seeder VM. The snapshot holds the differences between the seeder and the source VM. No new VM is created, so in essence you can’t use one seeder for multiple VMs to be replicated.

This is what made us change the original plan, which was to NOT restore any backups but just start replication and hope that a default Windows VM would offer enough identical blocks to be a good seeder.

Since the customer also used our Avamar Backup-as-a-Service, we decided to take a different approach and do a full VM restore into our datacenter, where the backup nodes are running too. Why not use Veeam for this? Because the full restore by Avamar would reuse the blocks we already had at our site from the file-level backups. Why not stay with Avamar then? Because Avamar can’t replicate, only back up and restore. We could have done a full image backup, but the restore times for all those images, which also had to be in sync, would have caused too much downtime for the customer. We needed a way to quickly switch between the customer site and our site, and Veeam was the best solution for this.

Eventually we restored the Avamar images into our datacenter, left them powered off (of course) and then I created a number of replication jobs. I created two jobs containing about 6 small VMs each that had a very low change rate and weren’t too big in size either. Per VM I connected a “replication map” VM, selected the proxies and started replicating.

Scheduling

It took me about a week to get a good schedule that would process all the VMs in the most efficient time frame. The first few runs also gave some issues with a number of VMs that had active snapshots, which prevented CBT from being enabled on the VM. I had two VMs on which one or more disks had some corruption at the Windows level, which caused processing to fail. Luckily a chkdsk in Windows solved it.

For a number of VMs I was quite surprised by the amount of data that had to be transferred daily. For example, both domain controllers had about 800MB of data transfer daily, and their Exchange server sometimes had 10GB of change. Don’t underestimate the change rate of your servers. The change rate of the domain controllers was traced back to a daily scheduled job that made an NTBackup of the AD to the D: drive.
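A quick back-of-the-envelope check shows why those change rates matter on the 10Mbit WAN line. The sketch below computes a lower bound on transfer time, assuming the full line is available, 1 GB = 1000 MB, and no compression, protocol overhead or competing Avamar traffic. Real runs will differ.

```python
LINK_MBIT_PER_S = 10  # WAN capacity mentioned in this post


def min_transfer_minutes(changed_mb: float, link_mbit_per_s: float = LINK_MBIT_PER_S) -> float:
    """Lower bound, in minutes, to push changed_mb megabytes over the link."""
    seconds = changed_mb * 8 / link_mbit_per_s
    return seconds / 60


# Daily change figures quoted above (assumption: 1 GB = 1000 MB).
DAILY_CHANGE_MB = {
    "domain controller": 800,
    "Exchange server (peak)": 10_000,
}

if __name__ == "__main__":
    for name, changed_mb in DAILY_CHANGE_MB.items():
        print(f"{name}: at least {min_transfer_minutes(changed_mb):.0f} minutes")
```

Under these assumptions a single 10GB Exchange delta needs well over two hours of an otherwise idle line, while each domain controller needs a little over ten minutes.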

I also lost some time trying to work with two proxies to speed things up. Each proxy with 4vCPU is capable of handling 2 jobs at the same time. Unfortunately, the proxies (or the one proxy at the beginning) weren’t the issue. Network bandwidth between the sites was, along with the IOPS performance of the storage. Adding a second proxy, thinking I could start four jobs at the same time, turned out to be a mistake. It almost brought the storage to a halt, and replication took almost double the time compared to when only two jobs ran at the same time. So back to the scheduling board.

Eventually I got the most consistent schedule with two proxies, which could potentially handle four jobs, while scheduling only two jobs to run at the same time. I set the jobs to start on the full hour, one after the other. Normally they would finish within an hour, and if for some reason a job ran longer, it would not mess up the schedule of the next job.
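The idea behind that schedule can be sketched roughly as follows: jobs are placed in hourly slots, with at most two jobs per slot. This is only an illustration of the planning, not how Veeam schedules jobs internally, and the job names and the 20:00 start are made up.

```python
from datetime import time


def hourly_slots(jobs, first_hour, per_slot=2):
    """Assign each job a start time on the full hour, at most per_slot jobs per hour."""
    plan = []
    for index, job in enumerate(jobs):
        hour = (first_hour + index // per_slot) % 24  # wrap around past midnight
        plan.append((job, time(hour=hour)))
    return plan


# Hypothetical job names, for illustration only.
JOBS = ["small-vms-1", "small-vms-2", "exchange", "sql", "fileserver"]

if __name__ == "__main__":
    for job, start in hourly_slots(JOBS, first_hour=20):
        print(f"{start:%H:%M}  {job}")
```

Fixed start times like these keep a long-running job from pushing the jobs behind it to a later time, at the cost of some idle time when a slot finishes early.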

Their biggest VM, at 1,5TB, kept giving issues when we tried to replicate it. Even browsing the datastore the VM was on was sometimes impossible and would time out in the vSphere Client. As an extra precaution, we decided to add a Synology NAS at the customer site and do a Veeam replication to that NAS. Even that gave errors, and then suddenly all problems were gone. It turned out that after vMotioning the VM to a different ESXi host, all went fine. Still, since it was two days before the big day, we decided to use the NAS for the

The big day

Finally, the day of the migration arrived. At 5pm we shut down all VMs at the customer site, except of course for Veeam VMs and vCenter and the domain controllers. We also stopped replication on all domain controllers on two other remote sites, to prevent replication errors when having to fall back in case something went wrong.

After all VMs were down, we started replication to our site. VMs that previously had NOT been quiesced during replication (to save time and get fewer errors) would now be synced correctly again. Replication went much faster than with running VMs, and instead of using the pre-defined schedule we switched to starting jobs manually. This saved us a few hours in the end. At 10pm all replications for the small VMs had finished and only two big VMs were left. We used VMware cloning to copy the big 1,5TB VM to the Synology NAS, then started the replication of the last VM to our site. At 6am both jobs had finished and at 7am a colleague drove to the customer site to pick up the NAS.

While the NAS was being transported, another colleague performed the network switch where IP routing was changed and the Citrix CAG was configured to work with different IP addresses.

At 7am we also started booting the VMs at our site, and at 9am we had finished our initial testing to prove that the environment was up and running without errors from our point of view. After the NAS had arrived it was mounted in our vSphere environment and we powered on the 1,5TB VM while it was still on the NAS. We did this because the customer had the requirement to test on Saturday and be finished at 3pm. Doing a little math, we figured that copying the VM to our storage would never be done in time for the customer to test. So we decided to run it from the NAS, have the customer perform their application test and then perform a storage vMotion, which could then run from 3pm to Monday morning if needed.
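The “little math” is along these lines. The sketch below computes the sustained rate needed to copy a VM of a given size within a given window. The 6-hour window is an assumption for illustration (roughly 9am to the 3pm deadline, leaving no time at all for testing), and 1 TB is taken as 1,000,000 MB.

```python
def required_mb_per_s(size_tb: float, window_hours: float) -> float:
    """Sustained throughput in MB/s needed to copy size_tb terabytes in window_hours."""
    size_mb = size_tb * 1_000_000  # assumption: decimal units
    return size_mb / (window_hours * 3600)


if __name__ == "__main__":
    # Hypothetical window: the whole period from 9am to 3pm.
    print(f"{required_mb_per_s(1.5, 6):.0f} MB/s")
```

That works out to roughly 69 MB/s sustained for the entire window, before the customer has tested anything, which is why running the VM from the NAS first and moving it afterwards was the safer order.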

Needless to say, the testing by the customer went very well. After a few hours they declared the migration complete and we could start the storage vMotion of the big VM, which eventually finished late Saturday night.

My Veeam wishlist

Working very intensively with Veeam Backup & Replication v6 in preparation of the migration also brought a list of wishes I have to further improve Veeam.

When setting replication jobs to run every x hours, for example, you can’t set a start time. Especially when you are trying NOT to run several jobs at the same time this is cumbersome, because you have to plan the moment you create the job, since that will be the time the job is scheduled.

Opening a job to change settings took 1:30 min on average. I’m not sure why this is so slow; I doubt it is because I was using SQL Express as the database. But it is really annoying.

When creating a replication job, the wizard first lets you select the source VM. It shows you the vCenter Servers from both sites, and then you have to browse down through the cluster to the source VM or search both environments for the VM. This takes quite some time for a normal-size cluster. When you continue the wizard and have to select the target cluster, datastore and VM, it again takes quite some time, especially in our hosting environment, which is quite large. Now I do understand that it can take some time to read the data from vCenter and that you want to be up to date. It would be great, though, if there were some sort of caching and a checkbox that lets you choose between live data and 1-hour-old data. (There already is a little refresh button in that selection screen.)

When replicating to a VM, Veeam will create and keep a snapshot. The fact that the snapshot remains especially caught me off guard: my datastore was filling up quicker than expected. Now I do understand why Veeam does this, and it is great that this way you can use your replication to go 1, 2, 3 or more replicas back in time. But I would also love the choice of having no snapshots. In the wizard, 1 is the smallest number of snapshots you can select. Alternatively, for extra safety, keep a snapshot during replication but clear it when the replication job has finished.

For planning of the jobs I really needed easy access to an overview that shows the amount of data transferred and time taken for all jobs over the past few days. There is no view or report that gives this info, but there is a great SQL View in the SQL Database that gives all the info you want. (See: http://forums.veeam.com/viewtopic.php?f=2&t=11035&p=48756#p48765 ). I hope this view can be made available in the reports section.

Conclusion

Using Veeam for this migration made it possible for us to switch this customer to a new datacenter with only a few hours downtime. Best part is that everything is done in a controlled way. You can pre-test the migration by just powering on the replicated VMs (make sure you have a separate VLAN for this) and see if they boot properly. Some aspects take some better understanding of the product and READING THE MANUAL but overall Veeam Backup & Replication is very easy to use and is a great help during migration scenarios.

Done the same for a SAN migration on the same site, and a month later a move of the headquarters from site A to B. Straightforward solution!

I strongly agree. Veeam has made admins’ lives a lot easier :) It’s very good for disaster recovery, VM backups, or migration. Veeam fits in all

Regards

Syed Jahanzaib