If you want to study for your VCP or VCDX exams, you really need a lab in which you can test what the study material is talking about. Hopefully you have a test environment at work that you can use, but if not, this blog post will help you put together your own homelab.

Before planning a lab, you first have to know what you want to do with it, so you can write down your requirements. Will this be a short-term lab that you only use for VCP or VCDX exam prep and hardly touch afterwards? Or will it also host some VMs that you use at home, and serve as a place to test new products and maybe even competing hypervisors?

Running ESX inside a VM

A short-term lab, used for walking through installs and getting familiar with the vCenter and vSphere wizards and screens, can run entirely on one computer. All you need is Linux, Windows or Mac OS X and VMware Player, VMware Workstation or VMware Fusion. There are three requirements that must be met:

– A 64-bit system with Intel VT or AMD-V enabled in the BIOS

– Enough RAM to run at least 4 VMs concurrently.

– Because you’ll probably need more than 3GB RAM, your host OS has to be 64-bit as well
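On a Linux host, a quick way to check the first requirement is to look for the `vmx` (Intel VT) or `svm` (AMD-V) flag in `/proc/cpuinfo`. The Python sketch below does just that. The function names are made up for this example, and it only tells you that the CPU offers the feature; whether it is actually switched on in the BIOS is something you still have to verify yourself.

```python
import platform


def virtualization_flag(cpuinfo_text):
    """Return 'Intel VT', 'AMD-V' or None based on the CPU flags in cpuinfo_text."""
    for line in cpuinfo_text.splitlines():
        # On x86 Linux the CPU feature list is on lines that start with "flags"
        if line.startswith("flags") and ":" in line:
            flags = line.split(":", 1)[1].split()
            if "vmx" in flags:
                return "Intel VT"
            if "svm" in flags:
                return "AMD-V"
    return None


def is_64bit_machine(machine=None):
    """True when the machine type reported by the OS is 64-bit x86."""
    machine = (machine or platform.machine()).lower()
    return machine in ("x86_64", "amd64")


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            text = f.read()
    except OSError:
        text = ""  # not Linux, or /proc is not readable
    print("64-bit machine:", is_64bit_machine())
    print("Hardware virtualization flag:", virtualization_flag(text) or "not found")
```

On Windows or Mac OS X there is no `/proc/cpuinfo`, so the script will simply report "not found" there; use the vendor's own tools in that case.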

What servers should your lab be able to host?

To work with ESX you of course need at least one ESX VM, but since you will probably be testing more than just an ESX installation, you will need at least two ESX VMs, a vCenter Server VM and a VM running a shared storage appliance such as OpenFiler or FreeNAS. With this setup you can play with VMotion, HA, DRS, iSCSI, NFS, templates, cloning, alarms and so on. You can do almost everything you could do in a production environment. Just don’t expect much performance, and the number of VMs you can run on ESX will be low, but it will get the job done. Instructions on how to install ESX(i) on VMware Workstation 6.x are easy to find using Google. VMware Workstation 7 supports the creation of special ESX VMs, so you don’t have to do all the magic tricks to get vSphere running in a VM.

How much RAM is enough?

During install, ESX checks for the memory requirement of 2GB, which means that when installing ESX inside a VM you have to assign 2GB RAM to that VM. After the installation has finished, however, you can lower the RAM of the VM by changing the RequiredMemory value in ESX or by adding the line /vmkernel/minMemoryCheck = "false" in ESXi.

For exact instructions see: Yellow-Bricks.com for ESX and search the comments for Mark Weaver’s tip on how to change this for ESXi. Reading the comments you see people bringing down the amount of RAM to 1480MB for the ESX VM.

Running vCenter Server inside a VM should be possible with 768MB. Although in a production environment you might want to run vCenter as a VM on ESX, in this lab it is recommended to create a separate Workstation VM for vCenter and not run it as a VM inside the already virtualized ESX. You will need Windows Server (2003 / 2008) with MS SQL Express as the database, and you may also want to install Active Directory in it. The VM running OpenFiler or FreeNAS can usually run with as little as 256MB RAM, which brings the total RAM needed for these four VMs to around 4GB. I’ll bet that with some more squeezing you can run it with less, but the more RAM you have, the smoother things run.
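To make the "around 4GB" figure concrete, here is the arithmetic as a small Python sketch. It assumes both ESX VMs are squeezed down to the 1480MB mentioned in the comments on Yellow-Bricks.com. The `fits_on_host` helper and the reserve for the host OS are my own illustration, not a VMware guideline.

```python
# RAM assigned to each lab VM in MB, using the figures mentioned above.
lab_vms = {
    "ESX host 1": 1480,
    "ESX host 2": 1480,
    "vCenter Server": 768,
    "OpenFiler / FreeNAS": 256,
}


def total_ram_mb(vms):
    """Sum the RAM assigned to all VMs, in MB."""
    return sum(vms.values())


def fits_on_host(vms, host_ram_mb, host_os_reserve_mb):
    """True when the VMs plus a reserve for the host OS fit in physical RAM."""
    return total_ram_mb(vms) + host_os_reserve_mb <= host_ram_mb


total = total_ram_mb(lab_vms)
print(f"VMs need {total} MB ({total / 1024:.2f} GB)")
```

The four VMs add up to 3984MB, so a machine with exactly 4GB leaves almost nothing for the host OS. Change the numbers in `lab_vms` to see how far you can squeeze your own setup.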

A bigger and permanent lab

If you decide to go for a bigger home lab that will be used for more than just simple vCenter and ESX testing, you could go for a lab built with whiteboxes and, if possible, a NAS for shared storage. When buying a PC or a real server for your lab, it will be difficult to find a reasonably priced server that is on the VMware HCL of supported systems. You could search eBay for old systems that are still on the HCL, but since the requirements for vSphere have changed to at least 64-bit and support for AMD-V or Intel VT, chances are slim that you will find a reasonably priced system. And let’s not forget about the power usage of a “real” server in your home lab.

Whitebox

There is a cheaper solution: a whitebox. A whitebox is a reasonably priced, self-built system made of components that work with ESX(i) even though they are not on the HCL. The power of whiteboxes comes from community support: people reporting which system they are running and whether ESX works on it. When you search for “whitebox vmware” you will find a number of sites where people talk about whiteboxes. A few sites you should certainly browse through:

– Building a VMware ESXi 5.0 Whitebox home lab by Michel Stevelmans

– http://ultimatewhitebox.com/

– http://www.vm-help.com/ by Dave Mishchenko

– http://www.ntpro.nl/blog/archives/930-New-ESX-WhiteBox-Asus-V3-P5G45.html by Eric Sloof

– http://www.techhead.co.uk/vmware-esx-whitebox-solutions-an-article-summary by Simon Seagrave

– And my own post: http://www.gabesvirtualworld.com/?p=531

When you decide to get a whitebox, it is not that expensive to put at least 8GB RAM in it. When selecting CPUs, keep in mind the VMware Fault Tolerance feature, which would be nice to be able to test in your lab. To give you an idea, the latest addition to my home lab was a whitebox with an Intel Q9440 CPU, 8GB RAM and a 650GB hard disk for around 600 Euro.

Shared Storage

Of course there are many ways to get shared storage for your lab. You could get a full Fibre Channel SAN, but it will be tough keeping it cheap. A better option is to invest in a NAS that can do iSCSI and NFS. In my opinion it is important to be able to play with iSCSI, so that is a feature your NAS should surely have. Most of these NAS devices also have NFS support, which ESX can use as well. A number of NAS devices that are popular right now are:

– Iomega IX2-200 (2 disks, NFS, iSCSI) (!!! On VMware HCL !!!) http://go.iomega.com/en/products/network-storage-desktop/storcenter-network-storage-solution/network-hard-drive-ix2/?partner=4740#tech_specsItem_tab

– Iomega IX4-200D (4 disks, NFS, iSCSI) (!!! On VMware HCL !!!) http://go.iomega.com/en/products/network-storage-desktop/storcenter-network-storage-solution/network-hard-drive-ix4-200d/#tech_specsItem_tab. To see how this IX4 performs, take a look at my previous post: Putting your storage to the test – Part 1 iSCSI on Iomega IX4-200D

– Qnap TS-509 Pro (5 disks, NFS, iSCSI) http://www.qnap.com/pro_detail_hardware.asp?p_id=104

– Drobo also has some nice boxes http://drobo.com/products/index.php

The boxes mentioned above aren’t too expensive: the smallest, the Iomega IX2-200 with 1TB of storage, is available for 249 Euro, and they all give you the opportunity to learn how to use iSCSI and NFS on ESX. Since it is shared storage, you can also do VMotion and Storage VMotion and play around with VMware HA.

If these boxes stretch your budget too much, you can also use a virtual SAN. A virtual SAN, or virtual SAN appliance, is a VM that builds iSCSI targets on the local hard disks of your ESX host and presents them to your ESX hosts. You then have shared storage running on one of your ESX hosts without the need for a NAS. The one drawback is that the ESX host running this appliance becomes a single point of failure for your shared storage, but for lab environments this might not be an issue.

Some examples of these appliances:

– StorMagic SvSAN offers a free version that can manage up to 2TB and paid versions that can do storage mirroring. http://www.stormagic.com/SvSAN.php, also see my review on StorMagic here: StorMagic SvSAN with High Availability mirroring

– FalconStor CDP Virtual Appliance (not free) also offers snapshots on SAN level http://www.falconstor.com

– StarWind Software also has a virtual SAN http://www.starwindsoftware.com

– Lefthand VSA is one of the earliest suppliers of a virtual SAN. http://h18000.www1.hp.com/products/storage/software/vsa/index.html 30-day trial.

– The Celerra virtual SAN is only available for EMC partners, see this link for more info: http://virtualgeek.typepad.com/virtual_geek/2008/08/celerra-virtual.html

Networking

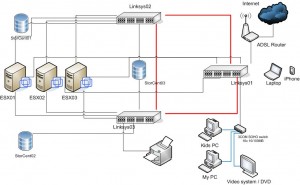

When running your lab inside VMware Workstation, you can run everything in isolated networks. Building a cheap gigabit network for your whitebox homelab can be done with the Linksys SLM2008. It is an eight-port gigabit switch that supports VLANs, STP, PortFast and jumbo frames. Personally I have three of those running in my lab, making it a fault tolerant network, which proves I sometimes do get carried away a bit. The image shows my own lab, in which the red lines are the uplinks between the SLM2008 switches that make the network fault tolerant. It also clearly shows I’m no Visio wiz :-)
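Why three switches and not two? A rough Python sketch shows the idea. It assumes the three switches are uplinked to each other in a ring, which is my reading of the red lines in the image. The switch names and helper functions are invented for the example. In such a ring STP blocks one uplink to prevent a loop and unblocks it again when another uplink fails.

```python
def is_connected(nodes, links):
    """Return True if every node can be reached from the first one."""
    nodes = list(nodes)
    if not nodes:
        return True
    seen = {nodes[0]}
    stack = [nodes[0]]
    while stack:
        current = stack.pop()
        for a, b in links:
            # Links are bidirectional, so follow them from either end
            if a == current and b not in seen:
                neighbour = b
            elif b == current and a not in seen:
                neighbour = a
            else:
                continue
            seen.add(neighbour)
            stack.append(neighbour)
    return seen == set(nodes)


def survives_any_single_link_failure(nodes, links):
    """True when removing any one link still leaves all nodes connected."""
    return all(
        is_connected(nodes, [link for link in links if link != failed])
        for failed in links
    )


switches = ["sw1", "sw2", "sw3"]
ring = [("sw1", "sw2"), ("sw2", "sw3"), ("sw3", "sw1")]
chain = [("sw1", "sw2"), ("sw2", "sw3")]

print(survives_any_single_link_failure(switches, ring))   # True
print(survives_any_single_link_failure(switches, chain))  # False
```

With the ring, any single uplink can fail and every switch can still reach the others. With a simple chain, losing one uplink cuts a switch off.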

Summary

Studying for your VCP / VCDX requires a lab and, as you’ve just read, there are several ways to build one. You can go for the almost free option of running everything inside VMware Workstation or build a permanent lab with shared storage for under 1500 Euro. There are many other blog posts by people describing their ESX whitebox or homelab, and most of them are willing to help you make the right choice.

Great post Gabe. Also the Celerra VSA is available to the general public (not just EMC partners, etc) on Chad's site: http://virtualgeek.typepad.com/virtual_geek/200…

Useful post about Homelab for VCP and VCDX http://bit.ly/5bIjA0

The download link for Celerra VSA doesn't seem to work anymore :(

For shared storage, the new QNAP x59 series are on VMware HCL. Having one myself for my home lab, I would recommend the TS-459 (http://www.qnap.com/pro_detail_feature.asp?p_id…).

Cheers,

Didier

I’m trying to find the iSCSI firmware support for the Iomega StorCenter ix2 Network Storage (34385) NAS

NAS 2 TB Serial ATA-300 HD 1 TB x 2 RAID 1, JBOD Gigabit Ethernet. Not the IX2-200, can you help me ?

Regards John

Gabe, excellent article and tips. When considering a NAS for your home that offers iSCSI support, like QNAP or Thecus, one thing to watch out for is how many simultaneous iSCSI connections these devices allow. So far, QNAP allows 8 I believe and Thecus 5, but that may change in their future firmware or product releases.

I would also like to see these NAS vendors add more than 2 GbE NICs and faster CPUs to their devices to take care of some of the bottlenecks in the setup.

Moreover, I am also in the process of building my home virtualization lab (extending my existing lab to accommodate virtualization):

http://www.virtualizationtalk.net/58-building-h…

Thanks

Great website for home labs. I was looking for this information to build one for myself. I would be thankful if someone could shed some light on VCDX preparation. I am VCP certified and want to proceed to VCDX next.
