In November 2009 I reviewed StorMagic’s SvSAN and was very pleased with the product. Now, three years later, I’m reviewing SvSAN again and am eager to see how it has improved in that time.

The Concept

For those not familiar with StorMagic SvSAN, I will briefly explain what it does. With SvSAN you can take the local disks of one or two ESXi hosts and present that local storage as shared storage to your ESXi environment. By mirroring the storage between two ESXi hosts, SvSAN is protected against a single host failure. The following diagram shows how an SVA (Storage Virtual Appliance) is installed on each ESXi host, and how the local disks are connected to the SVA and then mirrored and presented to the whole vSphere cluster over iSCSI. Should the ESXi host holding the SVA with the primary mirror fail, the second SVA will present the datastore to the ESXi hosts in the cluster.

SvSAN 5.0 has been certified with vSphere 5.1 and 5.0 hosts; if you’re still on vSphere 4.0 or 4.1 you should use SvSAN 4.5. With that certification comes support for (of course) VMware HA and VMware vMotion, because that is what this was all about in the first place, and now VMware Fault Tolerance is supported as well.

The smallest deployment is an environment with just two ESXi hosts that each run the SvSAN Virtual Appliance (SVA) and also run VMs that use the storage offered by the SVAs. If needed, more hosts in the environment can connect to the datastores and use them. In my home lab I have five ESXi hosts. Two of them offer their local disk through SvSAN as a datastore, and all five hosts use the datastores offered by SvSAN.

Installation

The installation of SvSAN was already very simple in version 4: import the SVA as an OVF to the ESXi host, assign a network config to the SVA, install the vCenter StorMagic plug-in and start configuring your mirrored storage. In version 5, installation is even easier. The first step is to install the vCenter plug-in on the vCenter Server. Next you click on the datacenter, where you will now see an extra tab named “StorMagic”. From here you can start a wizard to deploy an SVA to an ESXi host.

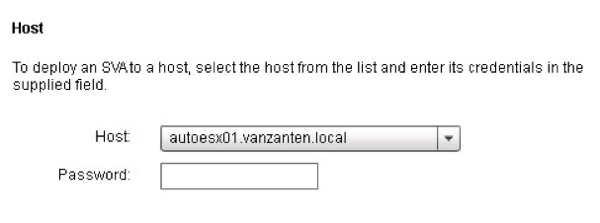

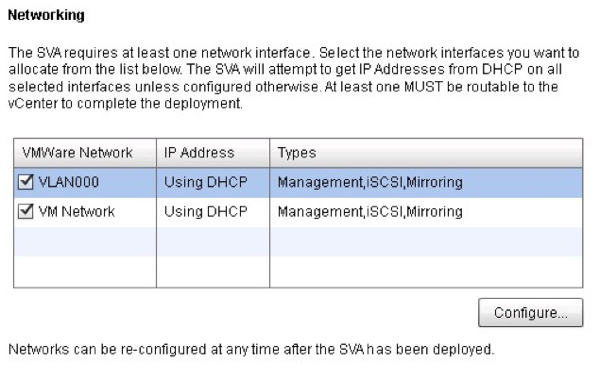

The wizard will automatically deploy the SVA on the ESXi host in just a few steps. First you select the host on which to deploy the SVA and enter the root password. Next select the RDM or existing datastore which you want to present through the SVA. Next select which VMkernel portgroups you want to use for SvSAN. Per portgroup you can choose if it should be used for Management, iSCSI and/or Mirroring. When the wizard has finished you’ll see the OVF being automatically deployed. Just a few minutes later the SVA is running.

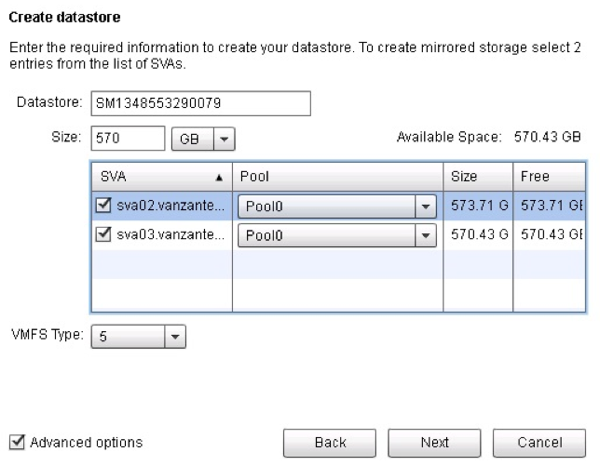

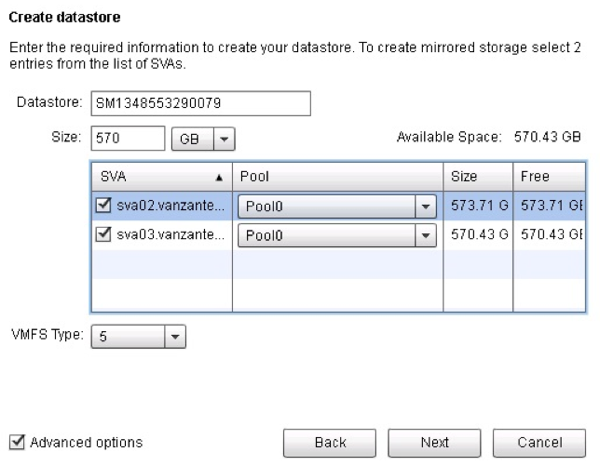

The next wizard is the one that creates a shared datastore. The steps again are very easy: give the datastore a name, see how much space is available on both SVAs, and select how much of that space you want to use for the mirrored datastore. Select VMFS5 or VMFS3, select whether or not to use a neutral storage host, and you’re done. The mirrored datastore will be created.

What is a neutral storage host?

A neutral storage host is used to avoid a split brain scenario in case there is a network outage. The SVA will use it to determine if it is isolated from the network should the other SVA become unreachable. A neutral storage host is NOT a requirement for a SvSAN deployment. Especially in small branch offices where you only want to deploy two ESXi hosts running SvSAN, it would be too expensive to add a neutral storage host (Windows Server) to the environment if that would be the only function.
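To make the idea concrete, here is a minimal sketch of how a mirror node could use a neutral third party to decide whether it is isolated. This is not StorMagic's actual algorithm; the names `Action` and `decide`, and the actions taken in each case, are assumptions made purely for illustration.

```python
from enum import Enum


class Action(Enum):
    KEEP_SERVING = "keep serving the datastore"
    TAKE_OVER = "take over the mirrored datastore"
    STOP_SERVING = "stop serving to avoid split brain"


def decide(peer_reachable: bool, neutral_host_reachable: bool) -> Action:
    """Hypothetical decision made by one SVA in a two-node mirror."""
    if peer_reachable:
        # Both mirror halves can see each other: nothing to decide.
        return Action.KEEP_SERVING
    if neutral_host_reachable:
        # The peer is gone but the neutral host still answers, so this
        # node is probably not the one that is cut off from the network.
        return Action.TAKE_OVER
    # Neither the peer nor the neutral host answers: assume this node is
    # isolated and must not accept writes the other side cannot see.
    return Action.STOP_SERVING


if __name__ == "__main__":
    for peer in (True, False):
        for neutral in (True, False):
            print(f"peer={peer!s:5} neutral={neutral!s:5} -> {decide(peer, neutral).value}")
```

Without a neutral host, a node that loses contact with its peer cannot tell a failed peer from its own network isolation, which is the case the third party is there to resolve.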

Failover

The failover of the datastore works as flawlessly as it did before. I tested it by running Iometer on a VM on the datastore presented by SvSAN. The VM was running in the memory of a third ESXi host that was not running any SVA components. I first ran a few Iometer tests without interruption, then ran the same tests again and hard shut down the SVA in the middle of the run.

The first test was run without interruption, the second test with interruption:

| Counter | Test 1 | Test 2 |
| --- | --- | --- |
| Total IOs per second | 143.08 | 119.70 |
| Total MBs per second | 1.17 | 0.98 |
| Average IO response time | 417.6953 ms | 508.2921 ms |
| Maximum IO response time | 883.2917 ms | 39038.1664 ms |
| % CPU utilization | 14.70% | 11.96% |

As you can see, there is a maximum IO response time of 39038 ms (39 seconds), which occurred when I shut down the SVA. In other words, after the first SVA failed, storage access and normal VM function were restored within 39 seconds. Nice result.
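For readers who want the relative impact rather than the raw counters, the numbers from the results table can be compared with a few lines of Python. This is only a sketch: the values are copied from the table, and the names `results` and `percent_change` are made up for this example.

```python
# Values copied from the results table: (Test 1, Test 2).
results = {
    "iops": (143.08, 119.70),
    "mb_per_s": (1.17, 0.98),
    "avg_response_ms": (417.6953, 508.2921),
}


def percent_change(before: float, after: float) -> float:
    """Relative change from the uninterrupted run to the interrupted run."""
    return (after - before) / before * 100.0


changes = {name: percent_change(t1, t2) for name, (t1, t2) in results.items()}
for name, change in changes.items():
    print(f"{name}: {change:+.1f}%")

# The longest single IO in Test 2 is the one that waited out the failover.
max_response_ms = 39038.1664
stall_seconds = max_response_ms / 1000.0
print(f"longest stalled IO: {stall_seconds:.1f} s")
```

Averaged over the whole run, throughput in the interrupted test comes out roughly a sixth lower than in the uninterrupted test, with the single long stall accounting for the failover itself.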

Note: The disk performance you see here should not be used to compare performance of the SvSAN with other products since my test setup only contains one 7500 rpm disk per host.

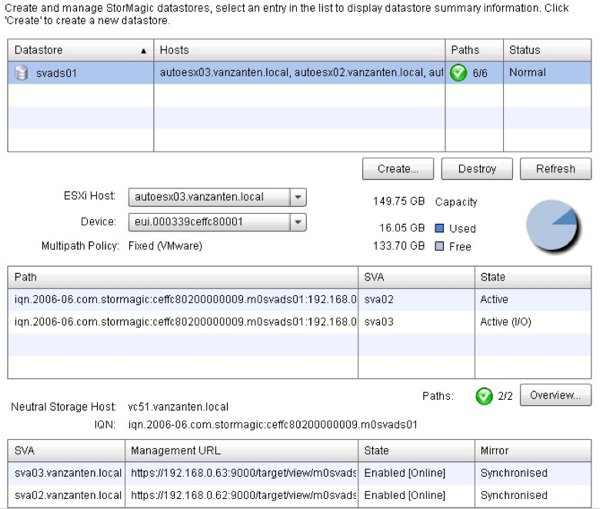

Management

The wizards to help you set up the SvSAN properly already take away a lot of management tasks, but even when there is some extra management needed, the SvSAN is easy to manage. A big improvement has been made in this area. Adding extra hosts to use the datastore is very easy by just selecting extra hosts and then SvSAN will configure the iSCSI connections for you.

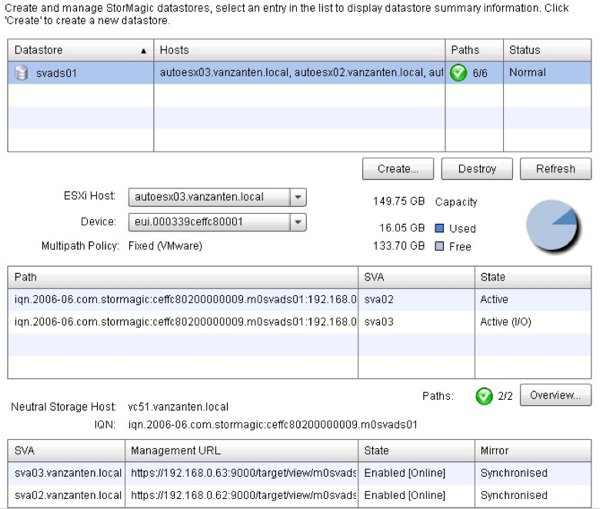

If you want to check the health of the shared datastore, you can get a complete view that shows the connected hosts, the active paths and the paths over which IO is running.

Comparison with VMware VSA

VMware now offers a product similar to StorMagic SvSAN, named the VMware VSA (vSphere Storage Appliance). The concept of the VMware VSA is the same: offering local disks as a datastore for your vSphere environment to enable the VMware HA and vMotion features. Where SvSAN uses iSCSI to present the datastore to the ESXi hosts, VMware uses the NFS protocol. This post was originally going to be a comparison between VMware VSA and StorMagic SvSAN, but the hardware requirements for the VMware VSA are more stringent than the StorMagic requirements and I was unable to get the VMware VSA running in my home lab. See:

http://www.vmware.com/support/vsa/doc/vsphere-storage-appliance-511-release-notes.html

Summary

With the release of SvSAN 5, StorMagic has proven that deployment and configuration of a cheap SAN solution based on local disks can be quick and simple. Throughout the testing I often crashed the SVA on purpose, and not once was there data corruption or a problem with the failover. The remote deployment features that have been added make SvSAN very easy to deploy in remote offices, a real improvement over the previous version of SvSAN. For more info on StorMagic SvSAN visit their website at:

http://www.StorMagic.com

SvSAN is available according to the capacity per server, i.e., in 2TB, 4TB, 8TB, 16TB, or unlimited capacity in each server. Capacities can be mixed.

Nice post. Question – do you know if there is some sort of migration path if you already have production vms on the local storage or do you need to migrate them somewhere and start from scratch?

There is no automatic migration path. You will have to do some planning, and the best way would be to have at least one empty disk to which you can move your VMs. Say you have two ESXi hosts, each with local disks about equal in size. You could empty one disk, add it to the first SVA, bring up that local disk as iSCSI storage, move the VMs from the second host onto the iSCSI storage, and then add the second SVA to the second host and build your mirror.

You can do a staggered deployment, i.e. migrate VMs over to one machine while the other is built up. Migrate back over to the new machine with SvSAN on it while the same is done to the other. Once both are up and running, activate mirroring and you are good to go.

Ha, just seen that you have answered the question after I posted my response. lol

You could also migrate all your VMs onto one host, install the SVA on the empty host which would allow you to take advantage of all the storage in the host, maybe re-provision the storage if necessary. Create an iSCSI target on the newly installed SVA, share it to both hosts and migrate the VMs to the new target and the other host. Perform the same action on the last server and convert the iSCSI target to a mirror.